Docker & Kubernetes : Service IP and the Service Type

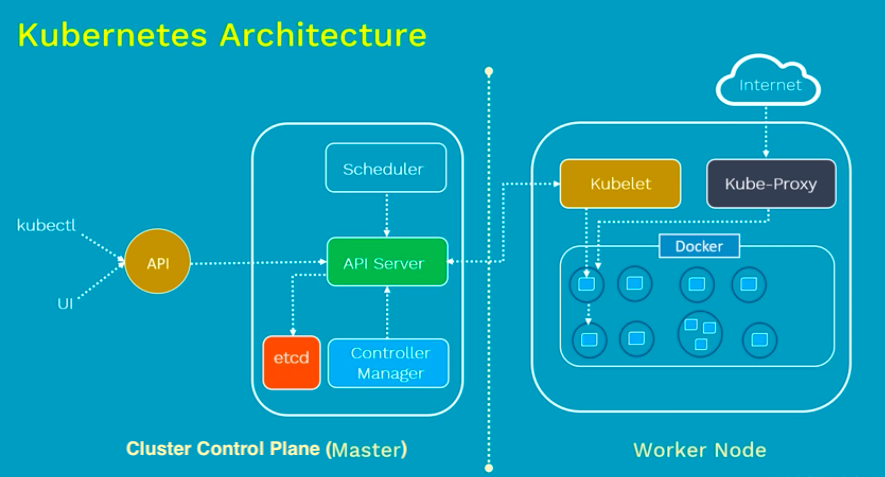

From Kubernetes Architecture made easy | Kubernetes Tutorial

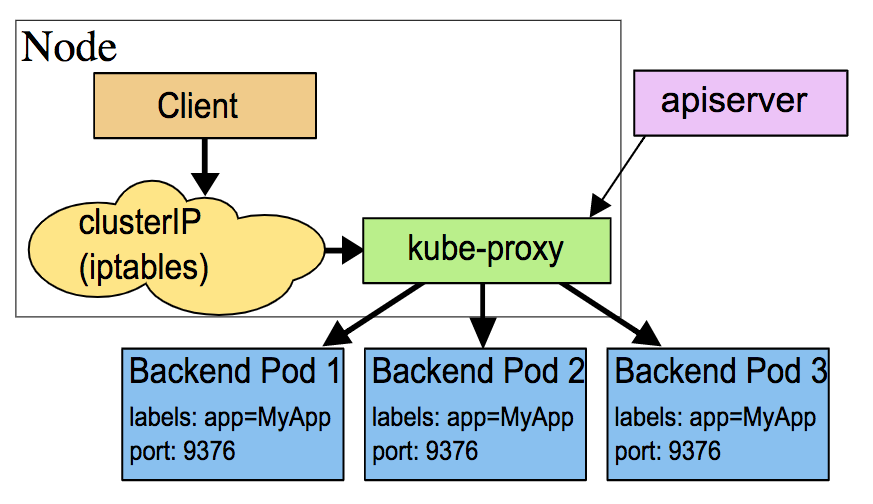

A Kubernetes Service is a way to expose an application running on Pods. In Kubernetes, a Service is an abstraction which defines a logical set of Pods.

Kubernetes gives Pods their own IP addresses and a single DNS name for a set of Pods, and can load-balance across them. With a Service, it is very easy to manage the load balancing configuration.

Deployment vs Service:

- deployment - responsible for keeping a set of pods running.

- service - responsible for enabling network access to a set of pods.

Based on Using Source IP, in this post, we'll do the following:

- Expose a simple application through various types of Services

- Understand how each Service type handles source IP NAT

- Understand the tradeoffs involved in preserving source IP

Terminology:

From Kubernetes - Services Explained

- Source NAT: replacing the source IP on a packet with the IP address of a node.

- Type=ClusterIP : Packets sent to Services are never source NAT'd by default.

- Type=NodePort : Packets sent to Services are source NAT'd by default.

- Type=LoadBalancer : Packets sent to Services are source NAT'd by default.

- Destination NAT: replacing the destination IP on a packet with the IP address of a Pod.

- VIP: a virtual IP address, such as the one assigned to every Service in Kubernetes.

Start minikube:

$ minikube start

minikube 1.10.1 is available! Download it: https://github.com/kubernetes/minikube/releases/tag/v1.10.1

To disable this notice, run: 'minikube config set WantUpdateNotification false'

minikube v1.9.2 on Darwin 10.13.3

KUBECONFIG=/Users/kihyuckhong/.kube/config

Automatically selected the hyperkit driver. Other choices: docker, virtualbox

Starting control plane node m01 in cluster minikube

Creating hyperkit VM (CPUs=2, Memory=2200MB, Disk=20000MB) ...

Preparing Kubernetes v1.18.0 on Docker 19.03.8 ...

Enabling addons: default-storageclass, storage-provisioner

Done! kubectl is now configured to use "minikube"

$ kubectl cluster-info

Kubernetes master is running at https://192.168.64.5:8443

KubeDNS is running at https://192.168.64.5:8443/api/v1/namespaces/kube-system/services/kube-dns:dns/proxy

Create a deployment for a small nginx webserver that echoes back the source IP of requests it receives through an HTTP header:

$ kubectl create deployment source-ip-app --image=k8s.gcr.io/echoserver:1.4

deployment.apps/source-ip-app created
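For readers who prefer a declarative setup, the command above can be approximated with a manifest. This is only a sketch: the file name, the single replica, and the container name `echoserver` are assumptions for illustration, while the image and the `app=source-ip-app` label match what this post uses.

```shell
# Write a Deployment manifest roughly equivalent to:
#   kubectl create deployment source-ip-app --image=k8s.gcr.io/echoserver:1.4
# The file name, replica count, and container name are assumptions.
cat > source-ip-app-deployment.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: source-ip-app
  labels:
    app: source-ip-app
spec:
  replicas: 1
  selector:
    matchLabels:
      app: source-ip-app
  template:
    metadata:
      labels:
        app: source-ip-app
    spec:
      containers:
      - name: echoserver
        image: k8s.gcr.io/echoserver:1.4
EOF

# To apply it against a running cluster (not executed here):
#   kubectl apply -f source-ip-app-deployment.yaml
```

The `app: source-ip-app` label matters because the Services created later select Pods by that label.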

There are three ways to route external traffic into our cluster:

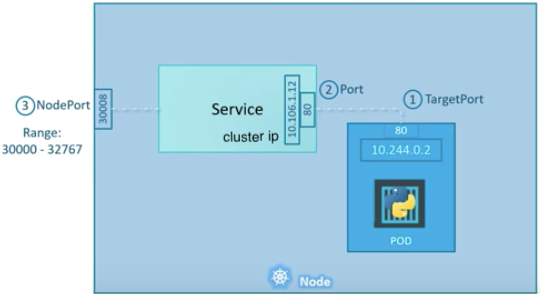

- Using Kubernetes proxy and ClusterIP: The default Kubernetes ServiceType is ClusterIP, which exposes the Service on a cluster-internal IP. To reach the ClusterIP from an external source, we can open a Kubernetes proxy via kubectl between the external source and the cluster. When the proxy is up, we're directly connected to the cluster, and we can use the internal IP (ClusterIP) for that Service. This is usually only used for development.

- Exposing services as NodePort: Declaring a Service as NodePort exposes it on each Node's IP at a static port (referred to as the NodePort). We can then access the Service from outside the cluster by requesting <NodeIp>:<NodePort>. Every service we deploy as NodePort will be exposed on its own port, on every Node. This can also be used for production, albeit with some limitations.

- Exposing services as LoadBalancer: Declaring a Service as LoadBalancer exposes it externally, using a cloud provider's load balancer solution. The cloud provider will provision a load balancer for the Service, and map it to its automatically assigned NodePort. This is the most widely used method in production environments.
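As a rough illustration of the first option, the helper below builds the API-server proxy URL for a Service port. The function name `service_proxy_url` is hypothetical, and the Service name `clusterip` is the one created later in this post; port 8001 is the default listening port of `kubectl proxy`. The path layout follows the same pattern as the KubeDNS URL printed by `kubectl cluster-info`.

```shell
# Build the API-server proxy URL for a Service port.
# Arguments: namespace, service name, service port, and the local port
# that `kubectl proxy` listens on (defaults to 8001).
service_proxy_url() {
  ns="$1"; svc="$2"; port="$3"; local_port="${4:-8001}"
  printf 'http://localhost:%s/api/v1/namespaces/%s/services/%s:%s/proxy/\n' \
    "$local_port" "$ns" "$svc" "$port"
}

# Typical use against a running cluster (not executed here):
#   kubectl proxy --port=8001 &
#   curl "$(service_proxy_url default clusterip 80)"
service_proxy_url default clusterip 80
```

Requests made this way travel through the API server, so this route is best treated as a development convenience rather than a way to study source IP behaviour.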

Packets sent to ClusterIP from within the cluster are never source NAT'd (replacing the source IP with node IP) if we're running kube-proxy in iptables mode (the default).

We can query the kube-proxy mode by fetching http://localhost:10249/proxyMode on the node where kube-proxy is running. In our case, the node is minikube:

$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

minikube Ready master 93m v1.18.0

$ minikube ip

192.168.64.5

$ curl $(minikube ip):10249/proxyMode

iptables

We can test source IP preservation by creating a Service over the source IP app:

$ kubectl expose deployment source-ip-app --name=clusterip --port=80 --target-port=8080

service/clusterip exposed

It created a clusterip service for the source-ip-app deployment, which serves on port 80 and connects to the containers on port 8080:

$ kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

clusterip ClusterIP 10.98.115.121 <none> 80/TCP 2m33s

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 113m

$ kubectl describe svc clusterip

Name: clusterip

Namespace: default

Labels: app=source-ip-app

Annotations: <none>

Selector: app=source-ip-app

Type: ClusterIP

IP: 10.98.115.121

Port: 80/TCP

TargetPort: 8080/TCP

Endpoints: 172.17.0.4:8080

Session Affinity: None

Events:
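The Service that `kubectl expose` generated can also be written out by hand. The following is a minimal sketch of such a manifest, assuming the file name `clusterip-service.yaml`; the name, selector, and ports mirror the `kubectl describe` output above.

```shell
# Write a ClusterIP Service manifest that mirrors the exposed Service:
# it selects Pods labelled app=source-ip-app, listens on port 80,
# and forwards to container port 8080. The file name is an assumption.
cat > clusterip-service.yaml <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: clusterip
  labels:
    app: source-ip-app
spec:
  type: ClusterIP
  selector:
    app: source-ip-app
  ports:
  - protocol: TCP
    port: 80
    targetPort: 8080
EOF

# To apply it against a running cluster (not executed here):
#   kubectl apply -f clusterip-service.yaml
```

The cluster IP itself is left out on purpose so that Kubernetes assigns one, as it did for the exposed Service.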

Let's try to hit the ClusterIP from a pod (busybox) in the same cluster (minikube):

$ kubectl run busybox -it --image=busybox --restart=Never --rm

If you don't see a command prompt, try pressing enter.

/ # ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

2: sit0@NONE: <NOARP> mtu 1480 qdisc noop qlen 1000

link/sit 0.0.0.0 brd 0.0.0.0

15: eth0@if16: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 02:42:ac:11:00:07 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.7/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

/ # wget -qO - 10.98.115.121

CLIENT VALUES:

client_address=172.17.0.7

command=GET

real path=/

query=nil

request_version=1.1

request_uri=http://10.98.115.121:8080/

SERVER VALUES:

server_version=nginx: 1.10.0 - lua: 10001

HEADERS RECEIVED:

connection=close

host=10.98.115.121

user-agent=Wget

BODY:

-no body in request-/ #

Note that the client_address (172.17.0.7) is the client pod's own IP address, as shown by ip addr above.

The wget was issued from the busybox client pod, so this IP is the true source IP: the packet was not source NAT'd.
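When repeating this check across several Service types, it can help to pull out just the `client_address` line from the echoserver response. The helper below is a small sketch; the function name `client_address` is hypothetical, and the sample text is a shortened copy of the output shown above.

```shell
# Print the value of the client_address line from an echoserver response
# read on standard input. Leading whitespace is tolerated.
client_address() {
  sed -n 's/^[[:space:]]*client_address=//p'
}

# Shortened sample of the response shown in this post.
sample='CLIENT VALUES:
client_address=172.17.0.7
command=GET'

printf '%s\n' "$sample" | client_address

# Against a live cluster (not executed here), for example:
#   wget -qO - 10.98.115.121 | client_address
```

Comparing this single value against the client's own address is enough to tell whether the packet was source NAT'd.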

Let's delete the service:

$ kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

clusterip ClusterIP 10.96.164.56 <none> 80/TCP 60m

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 170m

$ kubectl delete svc clusterip

service "clusterip" deleted

Packets sent to Services with Type=NodePort are source NAT'd (replacing the source IP with node IP) by default.

We can test this by creating a NodePort Service:

$ kubectl expose deployment source-ip-app --name=nodeport --port=80 --target-port=8080 --type=NodePort

service/nodeport exposed

It created a nodeport service for the source-ip-app deployment, which serves on port 80 and connects to the containers on port 8080:

$ kubectl get svc nodeport

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

nodeport NodePort 10.110.255.127 <none> 80:31968/TCP 2m19s

$ kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

minikube Ready master 7h8m v1.18.0 192.168.64.5 <none> Buildroot 2019.02.10 4.19.107 docker://19.3.8

$ kubectl get ep

NAME ENDPOINTS AGE

kubernetes 192.168.64.5:8443 7h19m

nodeport 172.17.0.4:8080 13m

Now we may want to try reaching the Service from outside the cluster through the node port allocated above using curl $node:$NODEPORT.

$ minikube ip

192.168.64.5

$ curl -s $(minikube ip):31968

CLIENT VALUES:

client_address=172.17.0.1

command=GET

real path=/

query=nil

request_version=1.1

request_uri=http://192.168.64.5:8080/

SERVER VALUES:

server_version=nginx: 1.10.0 - lua: 10001

HEADERS RECEIVED:

accept=*/*

host=192.168.64.5:31968

user-agent=curl/7.54.0

BODY:

-no body in request

Note that this is not the correct client IP: client_address=172.17.0.1 is a cluster-internal address, because the packet was source NAT'd on its way to the pod.

To preserve the original source IP address, and to make kube-proxy proxy requests only to local endpoints, we need to set the service.spec.externalTrafficPolicy field to Local as follows:

$ kubectl patch svc nodeport -p '{"spec":{"externalTrafficPolicy":"Local"}}'

service/nodeport patched

$ kubectl describe svc nodeport

Name: nodeport

Namespace: default

Labels: app=source-ip-app

Annotations: <none>

Selector: app=source-ip-app

Type: NodePort

IP: 10.110.255.127

Port: <unset> 80/TCP

TargetPort: 8080/TCP

NodePort: <unset> 31968/TCP

Endpoints: 172.17.0.4:8080

Session Affinity: None

External Traffic Policy: Local

Events: <none>

$ curl -s $(minikube ip):31968

client_address=192.168.64.1

command=GET

real path=/

query=nil

request_version=1.1

request_uri=http://192.168.64.5:8080/

SERVER VALUES:

server_version=nginx: 1.10.0 - lua: 10001

HEADERS RECEIVED:

accept=*/*

host=192.168.64.5:31968

user-agent=curl/7.54.0

BODY:

-no body in request-

Now we get a reply with the right client IP (client_address=192.168.64.1) from the one node on which the endpoint pod is running.
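The same setting can be declared up front in a manifest rather than patched in afterwards. This is a sketch under the assumption that the file is called `nodeport-local.yaml`; the node port is left unset so that Kubernetes allocates one, as it did for the exposed Service.

```shell
# Write a NodePort Service manifest with externalTrafficPolicy: Local,
# so that kube-proxy only forwards external traffic to endpoints on the
# node that received it. The file name is an assumption.
cat > nodeport-local.yaml <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: nodeport
spec:
  type: NodePort
  externalTrafficPolicy: Local
  selector:
    app: source-ip-app
  ports:
  - protocol: TCP
    port: 80
    targetPort: 8080
EOF

# To apply it against a running cluster (not executed here):
#   kubectl apply -f nodeport-local.yaml
```

The tradeoff is that a node without a local endpoint no longer serves the Service, so traffic has to be sent to nodes that actually run a matching pod.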

Let's delete the service:

$ kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 7h34m

nodeport NodePort 10.110.255.127 <none> 80:31968/TCP 28m

$ kubectl delete svc nodeport

service "nodeport" deleted

Packets sent to Services with Type=LoadBalancer are source NAT'd by default, because all schedulable Kubernetes nodes in the Ready state are eligible for load-balanced traffic. So if packets arrive at a node without an endpoint, the system proxies them to a node with an endpoint, replacing the source IP on the packets with the IP of the node.
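The behaviour described above can be summarized as a small decision rule. The function below is a simplified model, not kube-proxy's actual logic: the name `observed_source_ip` and its arguments are hypothetical, and the SNAT address is passed in rather than derived. It assumes that the Cluster policy always source NATs external traffic and that the Local policy drops packets arriving at a node without a local endpoint.

```shell
# Model which source IP the backend pod observes for external traffic.
# Arguments: policy (Cluster|Local), whether the receiving node hosts an
# endpoint (yes|no), the client IP, and the node-side address used for SNAT.
observed_source_ip() {
  policy="$1"; has_local_endpoint="$2"; client_ip="$3"; snat_ip="$4"
  case "$policy" in
    Cluster)
      # Traffic may be forwarded to any node, so the source is rewritten.
      echo "$snat_ip" ;;
    Local)
      if [ "$has_local_endpoint" = yes ]; then
        # Delivered on the same node, so the client IP is preserved.
        echo "$client_ip"
      else
        # No local endpoint: the packet is not forwarded elsewhere.
        echo "dropped"
      fi ;;
    *)
      echo "unknown policy: $policy" >&2
      return 1 ;;
  esac
}

observed_source_ip Cluster yes 192.168.64.1 172.17.0.1
observed_source_ip Local   yes 192.168.64.1 172.17.0.1
observed_source_ip Local   no  192.168.64.1 172.17.0.1
```

With the addresses from this post, the first two calls reproduce the two client_address values seen in the NodePort experiment.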

Let's expose the source-ip-app through a load balancer:

$ kubectl expose deployment source-ip-app --name=loadbalancer --port=80 --target-port=8080 --type=LoadBalancer

service/loadbalancer exposed

$ kubectl get svc loadbalancer

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

loadbalancer LoadBalancer 10.102.19.151 <pending> 80:31720/TCP 77s

$ kubectl describe svc loadbalancer

Name: loadbalancer

Namespace: default

Labels: app=source-ip-app

Annotations: <none>

Selector: app=source-ip-app

Type: LoadBalancer

IP: 10.102.19.151

Port: <unset> 80/TCP

TargetPort: 8080/TCP

NodePort: <unset> 31720/TCP

Endpoints: 172.17.0.4:8080

Session Affinity: None

External Traffic Policy: Cluster

Events: <none>

On cloud providers that support load balancers, an external IP address would be provisioned to access the Service. On Minikube, the LoadBalancer type makes the Service accessible through the minikube service command:

$ minikube service loadbalancer

|-----------|--------------|-------------|---------------------------|

| NAMESPACE | NAME | TARGET PORT | URL |

|-----------|--------------|-------------|---------------------------|

| default | loadbalancer | 80 | http://192.168.64.5:31720 |

|-----------|--------------|-------------|---------------------------|

🎉 Opening service default/loadbalancer in default browser...

The browser output looks like this:

CLIENT VALUES:

client_address=172.17.0.1

command=GET

real path=/

query=nil

request_version=1.1

request_uri=http://192.168.64.5:8080/

SERVER VALUES:

server_version=nginx: 1.10.0 - lua: 10001

HEADERS RECEIVED:

accept=text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.9

accept-encoding=gzip, deflate

accept-language=en-US,en;q=0.9,ko-KR;q=0.8,ko;q=0.7,zh-CN;q=0.6,zh;q=0.5,ja;q=0.4

connection=keep-alive

host=192.168.64.5:31720

upgrade-insecure-requests=1

user-agent=Mozilla/5.0 (Macintosh; Intel Mac OS X 10_13_3) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/81.0.4044.138 Safari/537.36

BODY:

-no body in request-

Note that the output we get in the browser from the minikube service loadbalancer command is the same as the output of curl http://192.168.64.5:31720.

As we did for the NodePort Service, to preserve the original source IP address we need to set the service.spec.externalTrafficPolicy field as follows:

$ kubectl patch svc loadbalancer -p '{"spec":{"externalTrafficPolicy":"Local"}}'

service/loadbalancer patched

$ kubectl get svc loadbalancer -o yaml | grep -i healthCheckNodePort

healthCheckNodePort: 31467

$ kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

source-ip-app-5b85689676-h7mtm 1/1 Running 0 7h22m 172.17.0.4 minikube <none> <none>

$ curl $(minikube ip):31467/healthz

{

"service": {

"namespace": "default",

"name": "loadbalancer"

},

"localEndpoints": 1

}

$ minikube service loadbalancer

|-----------|--------------|-------------|---------------------------|

| NAMESPACE | NAME | TARGET PORT | URL |

|-----------|--------------|-------------|---------------------------|

| default | loadbalancer | 80 | http://192.168.64.5:31720 |

|-----------|--------------|-------------|---------------------------|

🎉 Opening service default/loadbalancer in default browser...

The new browser output looks like this:

CLIENT VALUES:

client_address=192.168.64.1

command=GET

real path=/

query=nil

request_version=1.1

request_uri=http://192.168.64.5:8080/

SERVER VALUES:

server_version=nginx: 1.10.0 - lua: 10001

HEADERS RECEIVED:

accept=text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.9

accept-encoding=gzip, deflate

accept-language=en-US,en;q=0.9,ko-KR;q=0.8,ko;q=0.7,zh-CN;q=0.6,zh;q=0.5,ja;q=0.4

connection=keep-alive

host=192.168.64.5:31720

upgrade-insecure-requests=1

user-agent=Mozilla/5.0 (Macintosh; Intel Mac OS X 10_13_3) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/81.0.4044.138 Safari/537.36

BODY:

-no body in request-
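The /healthz response on the healthCheckNodePort, queried earlier, reports how many endpoints of the Service run on that node. A load balancer that uses this port would be expected to send traffic only to nodes reporting at least one local endpoint. The helper below is a rough sketch for reading that count without extra tools; the function name `local_endpoints` is hypothetical, and the sample JSON is a compact copy of the response shown in this post.

```shell
# Print the localEndpoints count from a healthCheckNodePort /healthz
# response read on standard input. Whitespace is stripped first so the
# pattern works for both pretty-printed and compact JSON.
local_endpoints() {
  tr -d ' \t\n' | sed -n 's/.*"localEndpoints":\([0-9][0-9]*\).*/\1/p'
}

# Compact copy of the response shown in this post.
sample='{ "service": { "namespace": "default", "name": "loadbalancer" }, "localEndpoints": 1 }'

printf '%s' "$sample" | local_endpoints

# Against a live cluster (not executed here), for example:
#   curl -s "$(minikube ip):31467/healthz" | local_endpoints
```

A value of 0 on a node would mean that, with externalTrafficPolicy set to Local, that node should not receive traffic for the Service.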

- Using Source IP

- Service

- kubectl Cheat Sheet

- Getting external traffic into Kubernetes – ClusterIp, NodePort, LoadBalancer, and Ingress

- Hello Minikube

